The most significant outcome of a smart city (and its key indicator) is that it gives citizens alternatives and opportunities to lead a better life. This could take the form of efficient and effective public transportation, proactive traffic monitoring and easing, automated monitoring of utility services, weather management, emergency management, public safety and, more importantly, a combination of these services through correlation. Each of these Smart City services (and please note that the above list is not exhaustive) is data-intensive and produces large volumes of real-time data that, when leveraged, can generate meaningful insights and drive an enhanced experience for all city stakeholders.

While city agencies and governments worldwide have been working through various initiatives (for example, Share-PSI) to tap into this data and generate value, they are limited by the resources (time, money, labor) at their disposal. What if the data generated through city and government initiatives were made available to private entities and the general public? Of course, this needs careful scrutiny of what data can be shared beyond the boundaries and firewalls of the agencies. However, that should be a small hurdle to overcome considering the immense potential of the data once these external stakeholders tap into it, further enhancing the city ecosystem. This needs governments to open up: to each other and to the external world. This needs Open Government Data.

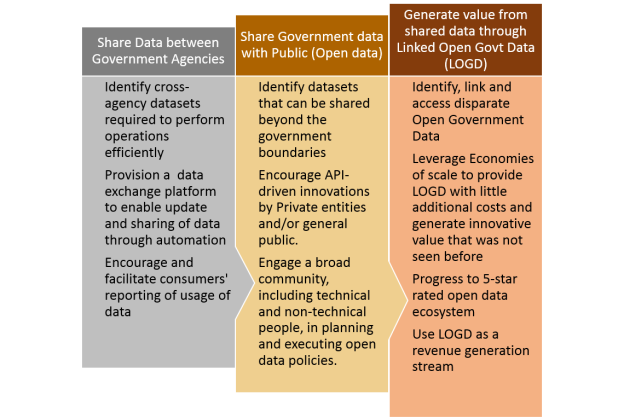

In an earlier blog, I highlighted how government data can be used in different contexts – Government to Government (G2G), Government to Business (G2B) and Government to Citizen (G2C). The progression to Open Government Data needs a methodical approach and ideally takes the following transition path.

Each government agency needs to scrutinize its data to identify the datasets that are sought by other agencies and the non-sensitive datasets that can be opened up between agencies. A further level of scrutiny is required to identify the subset of data that can be exposed to non-government city stakeholders (private entities, general public).

However, not all data that can potentially be opened up will be really helpful. Some of the data may be in a very crude form that consumers cannot leverage without extensive effort and investment. For example, scanned (anonymized) application forms are of little value until the data is actually digitized, either through some OCR mechanism or manually. This discourages consumers (more specifically, the technical community) from tapping into the data even if it is made available. In this era of DevOps and agile, the idea with Open Data is to provision datasets that can be tapped into easily and generate value quickly. So, how does one identify high-value datasets – data that is smart by default?

What does Smart Data mean?

While there cannot be a binary method of identifying Smart Data, some very detailed parameters have evolved from the discussions at The Open Group. One such discussion has arrived at the following 9 dimensions of quality that should be applied to data:

While these 9 quality parameters are important, one needs to look at the specific business requirement and the corresponding datasets to assign weighting factors to each parameter to suit the context. Note also that each parameter has a further level of detail that has to be studied before declaring the data to be of high quality. For example, is Credibility defined only by the trustworthiness of sources? What if the data has undergone some transformation in the interim before being made available?
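The idea of context-specific weighting can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 0 to 1 score scale, the weight values and the function name `weighted_quality_score` are hypothetical, and only the two parameters named in this post (Credibility and Processability) are shown. The remaining parameters would be added to the dictionaries in the same way.

```python
from typing import Dict


def weighted_quality_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-parameter scores (each 0..1) into one weighted score (0..1)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same parameters")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


# Hypothetical context in which credibility matters more than processability.
weights = {"credibility": 3.0, "processability": 2.0}
# Hypothetical assessment of one dataset on a 0..1 scale.
scores = {"credibility": 0.9, "processability": 0.4}

overall = weighted_quality_score(scores, weights)
print(f"overall quality: {overall:.2f}")
```

A different business requirement would keep the same scores but change the weights, which is exactly why the same dataset can be high-value in one context and marginal in another.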

As another example, the Processability parameter mentioned above can be studied further using the 5-star data definition provided by Tim Berners-Lee.

Most government agencies will have a mix of data across these star ratings, leaning heavily towards one-star and two-star data. While one-star and two-star data is fairly easy to generate, it limits usage on the consumers’ side when exposed as Open Data. Few consumers are willing to invest in, or are competent enough for, refining the provider's data to make it more consumable, so the uptake of this kind of data will be low. Provider agencies will need to invest in progressing along the maturity roadmap: make data non-proprietary, add semantics and link to related data and content. More importantly, they should adopt these methods for all data, both what has been generated to date and what will be generated in the future. As a provider agency progresses on this roadmap, it will start seeing corresponding adoption and value generation from the larger city ecosystem. The progression towards 5-star data involves a change in organizational practices and culture, but once that becomes business as usual, the effort required is fairly low compared to the uptake seen on the consumer end.
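To make the star ratings concrete, here is a minimal Python sketch of how an agency might rate its own catalogue. It follows the scheme as it is commonly summarized (open licence, then machine-readable structure, then a non-proprietary format, then URIs to identify things, then links to other data), where each star requires all the earlier ones. The class, field names and sample datasets are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    open_license: bool         # 1 star: published under an open licence, any format
    machine_readable: bool     # 2 stars: structured data, e.g. a spreadsheet, not a scan
    open_format: bool          # 3 stars: non-proprietary format, e.g. CSV
    uses_uris: bool            # 4 stars: URIs identify the things described
    links_to_other_data: bool  # 5 stars: linked to other data for context


def star_rating(ds: Dataset) -> int:
    """Count stars in order; stop at the first criterion that is not met."""
    criteria = [
        ds.open_license,
        ds.machine_readable,
        ds.open_format,
        ds.uses_uris,
        ds.links_to_other_data,
    ]
    stars = 0
    for met in criteria:
        if not met:
            break
        stars += 1
    return stars


# Hypothetical catalogue entries.
scanned_forms = Dataset("scanned application forms", True, False, False, False, False)
spreadsheet = Dataset("permit register (spreadsheet)", True, True, False, False, False)
csv_export = Dataset("permit register (CSV)", True, True, True, False, False)

for ds in (scanned_forms, spreadsheet, csv_export):
    print(ds.name, "->", star_rating(ds), "star(s)")
```

Rating datasets this way gives an agency a simple inventory of where its data sits on the maturity roadmap and which step comes next for each dataset.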

How can governments be smart?

Most governments worldwide have opened up to the idea of Open Data, and the ones that have not will only delay it but will eventually get there. The question is no longer whether government agencies will open their data, but when and how they will open it. Governments need strategic planning to execute initiatives of this nature and to drive collaborative execution across agencies. A substantial focus on adoption enablement, to ensure governance and adherence to standards, is essential.

Exchanging data between agencies does not come naturally to most government organizations, and when they do share data, they rely on very manual or archaic methods: paper, phone requests, email requests and so on. Initially, the agencies have to move to an operating model where data is made available on a data exchange platform through a single window (for example, a portal). Data can be requested and procured through the same window, either in real time or in batch mode depending on the nature of the request. At a minimum, this will ease government operations and make them more effective and efficient. It also makes life easier for citizens, who no longer have to share the same data multiple times with different agencies.

This is best implemented by encapsulating the data sharing services as APIs, since this can potentially foster further innovation within the government ecosystem.
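As a rough sketch of the single-window idea, the Python below models a data exchange as a small in-memory catalogue with one request entry point. Everything here is an assumption for illustration: the class names, the two audience levels ("government" and "public") and the sample dataset are hypothetical, and a real platform would expose this over a network API with proper authentication.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SharedDataset:
    owner: str            # publishing agency
    audience: str         # "government" (agencies only) or "public"
    records: List[dict]


class DataExchange:
    """Single window through which datasets are published and requested."""

    def __init__(self) -> None:
        self._catalog: Dict[str, SharedDataset] = {}

    def publish(self, name: str, dataset: SharedDataset) -> None:
        if dataset.audience not in ("government", "public"):
            raise ValueError("audience must be 'government' or 'public'")
        self._catalog[name] = dataset

    def request(self, name: str, requester_type: str) -> List[dict]:
        """Return a copy of the records if the requester may see them."""
        dataset = self._catalog[name]
        if dataset.audience == "government" and requester_type != "government":
            raise PermissionError(f"{name} is shared between agencies only")
        return list(dataset.records)


exchange = DataExchange()
exchange.publish(
    "address-register",
    SharedDataset(owner="Registry", audience="government", records=[{"id": 1}]),
)

# Another agency can fetch the data once instead of asking the citizen again.
agency_view = exchange.request("address-register", requester_type="government")
```

The same entry point serves both stages of the transition path: agency-to-agency sharing first, and later public access for datasets whose audience is widened after scrutiny.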

Once the data has been opened up between agencies, it becomes relatively easy to progress to sharing the non-sensitive data with non-government stakeholders. The API approach can be leveraged further to encourage innovation in the digital economy.

The next level of progression will be to move to linked open government data (LOGD) and use it as a revenue stream. LOGD can demonstrate value in a wide range of use cases that were not thought of earlier. As an example, imagine the impact of linking real-time public transport data (from the Transportation department) to an event in the city (organized by the Tourism department) and to weather data (gathered by the Meteorological department) to help citizens plan their journey.
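The journey-planning example can be sketched in Python to show why shared identifiers matter. The three dictionaries below stand in for data published by three departments; the identifiers, field names and values are all made up for illustration, and real LOGD would typically use URIs and a linked data format rather than in-memory dictionaries.

```python
from typing import Dict, List

# Hypothetical Tourism department data: events, keyed by an event identifier.
EVENTS: Dict[str, Dict[str, str]] = {
    "event/food-festival": {
        "name": "Food Festival",
        "date": "2024-06-01",
        "near_stop": "stop/central",  # link into the transport dataset
    }
}

# Hypothetical Transportation department data: departures per stop.
DEPARTURES: Dict[str, List[str]] = {
    "stop/central": ["09:15", "09:45", "10:15"],
}

# Hypothetical Meteorological department data: forecast per date.
WEATHER: Dict[str, str] = {
    "2024-06-01": "rain",
}


def plan_journey(event_id: str) -> Dict[str, object]:
    """Follow the links from an event to transport and weather data."""
    event = EVENTS[event_id]
    return {
        "event": event["name"],
        "departures": DEPARTURES.get(event["near_stop"], []),
        "forecast": WEATHER.get(event["date"], "unknown"),
    }


plan = plan_journey("event/food-festival")
print(plan)
```

The lookup only works because the event record carries keys that the other two datasets also use. Agreeing on such shared identifiers across departments is the step that turns three separate open datasets into linked data.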

Governments need to take up planned initiatives to tap into the potential of locked-up data. The data needs to be pruned and polished to make it more relevant and easier to consume. Once the city ecosystem taps into this data, it can be applied in daily-life scenarios that impact the community and thereby deliver a signature city experience. The possibilities are immense. All that is required is to take the initiative and tap into the value of the new natural resource: data. The sooner, the better.

Closing thought – Is the Open Government Data story complete once government entities have made data available in 5-star format (the best possible format)? Or would you say the story has only started on a strong footing? There is a lot more to follow…